WDTA Releases First Batch of International AI Security Standards to Advance Global Generative AI Development

2024-04-22 15:48:00

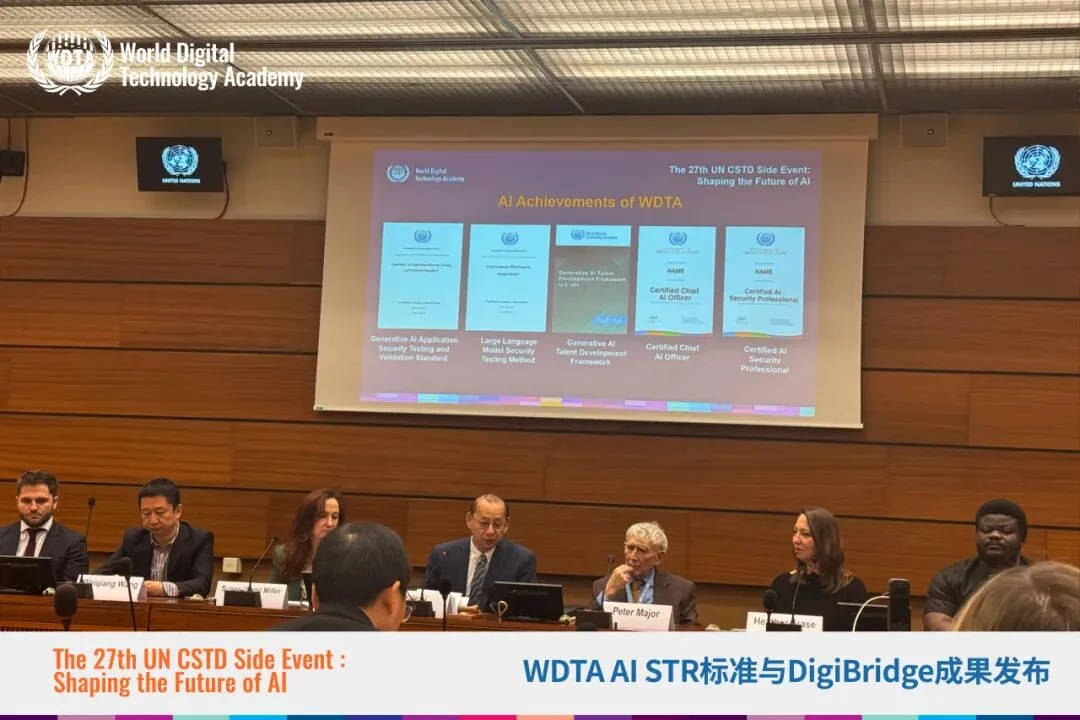

On April 16, the World Digital Technology Academy (WDTA) released two international standards at the Palace of Nations, the seat of the UN's Geneva headquarters: the Generative AI Application Security Testing Standard and the Large Language Model Security Testing Methodology. This marks the first time an international organization has issued international standards in the LLM security field, giving the industry a unified testing framework. The standards are expected to have a far-reaching impact on the AI sector, supporting the safe and reliable development of AI technologies.

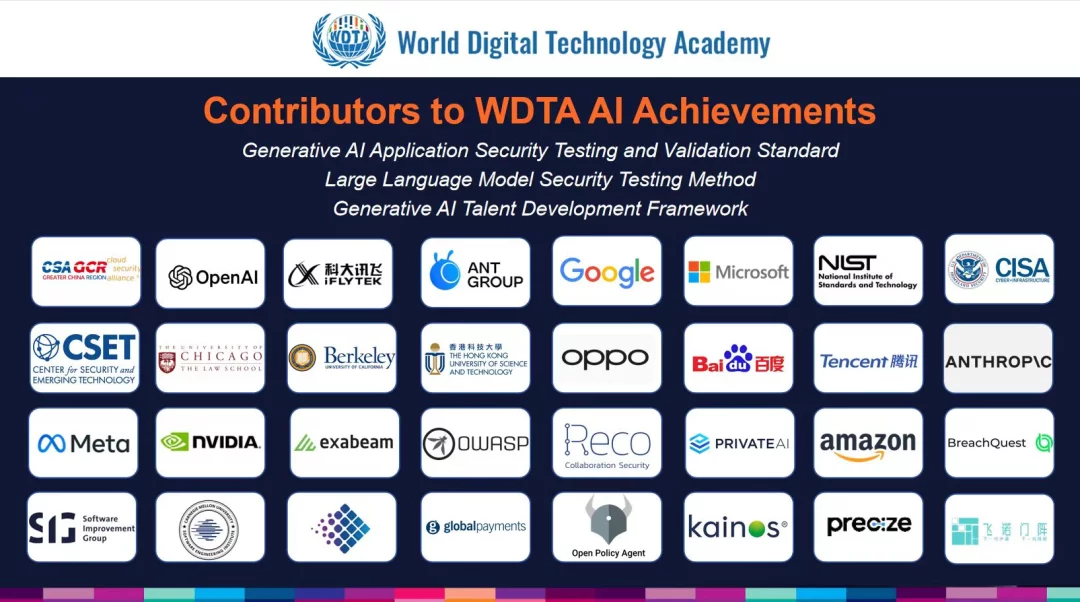

WDTA unveiled the standards at the 27th Session of the United Nations Commission on Science and Technology for Development (CSTD). They were compiled by an expert team led by Huang Lianjin, Leader of the WDTA AI Safety, Trust, and Responsibility Working Group and Vice President of the Research Institute of CSA Greater China. The two standards draw on contributions from dozens of organizations, including CSA Greater China, OpenAI, Ant Group, Google, Microsoft, Amazon, NVIDIA, OPPO, iFLYTEK, Baidu, Tencent, the University of California, Berkeley, the University of Chicago, and the Hong Kong University of Science and Technology. The breadth of participation reflects extensive industry collaboration and collective wisdom.

According to Li Yuhang, Executive Director of WDTA, the Generative AI Application Security Testing Standard provides a framework for testing and verifying the security of generative AI applications—especially those built with large language models. It defines the testing and verification scope for each layer of the AI application architecture, ensuring that all aspects of AI applications undergo rigorous security and compliance assessments.

He noted that the Large Language Model Security Testing Methodology offers a comprehensive, rigorous, and practical structured approach to evaluating the security of LLMs themselves. It sets out an LLM security risk classification, methods for classifying and grading attacks, and testing approaches. It also introduces a first-of-its-kind classification standard for four attack techniques at different intensity levels, along with strict evaluation indicators and testing procedures. These provisions help developers and organizations identify and mitigate potential vulnerabilities, ultimately improving the security and reliability of AI systems built with LLMs.

WDTA leaders stated that the standards will continue to evolve as new generative AI technologies, and the application architectures built on generative AI models, develop. WDTA emphasized that speed, openness, and inclusivity are the core principles behind the standards: only by keeping pace with technological development can standards continue to guide and regulate the industry.
