PROGRAM & INITIATIVES

Assessment and Certification

Artificial Intelligence Safety, Trust & Responsibility

AI STR Certification

Artificial Intelligence Safety, Trust, and Responsibility (AI STR) Certification is a global program created by the World Digital Technology Academy (WDTA) based on the WDTA AI STR international standards. It provides a clear framework for ensuring that AI systems are secure, trustworthy, and responsibly governed.

The certification assesses technical safeguards as well as ethical and legal compliance, helping organizations manage risks related to data protection, fairness, and broader societal impact. Achieving AI STR Certification demonstrates a commitment to responsible AI and strengthens trust with users, partners, and regulators. The program supports the global development of AI that is safe, transparent, and aligned with the public interest.

AI Application

Evaluate and validate generative artificial intelligence (GenAI) applications for safety, trust, and accountability.

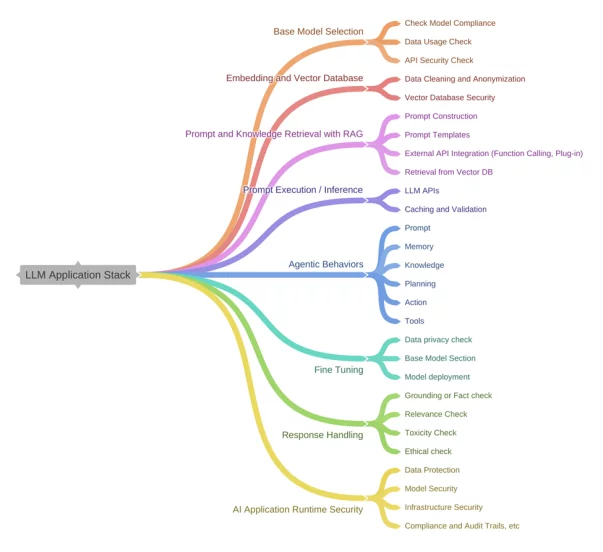

Large Language Model

Establish rigorous security standards and evaluation procedures to ensure large language models resist adversarial attacks, reduce risks, and promote responsible use.

AI Agent

Agent risk types are categorized in finer detail, and testing methods such as model inspection, network communication analysis, and tool fuzz testing are refined or newly proposed.

Generative AI Application Security Certification

The Generative AI Application Security Certification is conducted based on the WDTA AI STR-01 standard.

Certification Basis

WDTA AI STR-01 Generative AI Application Security Testing and Validation Standard

Target

Applications built on generative artificial intelligence (from LLM to GenAI)

This certification addresses key areas:

Large Language Model Security Certification

The Large Language Model Security Certification is conducted based on the WDTA AI STR-02 Large Language Model Security Testing Method.

Certification Basis

WDTA AI STR-02 Large Language Model Security Testing Method

Target

Large Language Models

Value of LLM Security Certification

Enhancing Security and Reliability

Through rigorous security testing and evaluation, enterprises can identify and repair potential vulnerabilities in large language models, significantly improving the overall security and reliability of the models and reducing the risk of adversarial attacks.

Reducing Operational Risks

Through systematic security detection and protective measures, enterprises can effectively reduce operational risks caused by model security issues, avoiding potential economic losses and brand damage.

Promoting Responsible Use

The certification helps organizations consider social impact and ethical issues more thoroughly when designing and deploying AI systems, thereby promoting the responsible use of AI technology.